MacBook Neo: Productivity Benchmarks

LLVM compilation benchmarks, local LLM inference, and whether 8GB is enough for developers.

Why Test Developer Workloads?

As an engineer, my instinct is to test software development tools. Not because I think a developer should be buying this laptop, but because it's ultimately a computer, capable of computing, and I want to measure that.

I think the real audience for this machine is students. The question they'll ask is: "is this okay for my programming courses?" And I am often surprised by the assertion that 16GB or more is necessary for these tasks. It was not so long ago that developers were building software with much less.

Modern Electron-based IDEs like VS Code still run just fine on 8GB of RAM. A relatively heavy Node application, or a server and frontend combo, shouldn't be a showstopper. Where things get impractical is when you factor in multiple IDEs open simultaneously, larger containerised stacks, or microk8s. 8GB will feel cramped. But honestly, if that's your use case you already know this machine won't cut it. You don't need me to tell you that.

For a student dipping their toes into programming, writing the odd Python script or building a Node.js application, the MacBook Neo will cope just fine.

To prove that, I need a compilation benchmark that's heavy enough to be meaningful.

LLVM Compilation

To stress-test the Neo's CPU in a real-world compilation scenario, I'm using the Timed LLVM Compilation benchmark from the Phoronix Test Suite. It measures how long it takes to build the LLVM compiler stack from source.

LLVM is a heavy project to compile. It's incredibly CPU-intensive, with lots of C++ templates, aggressive inlining, and big headers with deep include chains. It's far heavier than the typical web backend or SaaS project, but it's not quite WebKit or Chromium levels of heft and has comparatively few external dependencies, which makes it a good middle ground.

Timed LLVM Compilation

Lower is better. MacBook Neo: 1604s (4% deviation, n=9). Reference results from OpenBenchmarking.org (March 2026).

The Core Ultra 7 258V is an ultra-low-voltage processor found in premium thin-and-light Windows laptops with a similar footprint to the Neo, such as the Lenovo ThinkPad X1 Carbon Gen 13 and the HP EliteBook Ultra G1i. Those machines typically cost two to three times as much. The Neo's compile time of just under 27 minutes is competitive with these chips, and it finishes slightly ahead of the 258V.
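For reference, 1604 s works out to 26 minutes 44 seconds. The sketch below does that conversion and also turns the reported 4% deviation into a range, assuming (my reading, not something the benchmark caption states) that it is a relative standard deviation across the nine runs.

```python
# Convert the Neo's mean LLVM build time into minutes and seconds, and
# show the spread implied by the reported 4% deviation.
# Assumption: "4% deviation" is read as a relative standard deviation
# across the n=9 runs; the individual run timings are not listed here.

MEAN_SECONDS = 1604.0
RELATIVE_DEVIATION = 0.04


def as_minutes_seconds(seconds: float) -> tuple[int, int]:
    """Split a duration in seconds into whole minutes and leftover seconds."""
    minutes, secs = divmod(round(seconds), 60)
    return minutes, secs


def deviation_band(mean: float, relative: float) -> tuple[float, float]:
    """Return (mean - sigma, mean + sigma) for a relative standard deviation."""
    sigma = mean * relative
    return mean - sigma, mean + sigma


if __name__ == "__main__":
    mins, secs = as_minutes_seconds(MEAN_SECONDS)
    low, high = deviation_band(MEAN_SECONDS, RELATIVE_DEVIATION)
    print(f"Mean build time: {mins} min {secs} s")
    print(f"One-sigma band: {low:.0f} s to {high:.0f} s")
```

Under that reading, a typical run lands somewhere between about 1540 s and 1668 s.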

I wouldn't want to use this as an engineering daily driver, but the comparison with the Ryzen 7 4700U and Core i7-1185G7 is telling: both are common in secondhand ThinkPads that still trade for a few hundred pounds. The Neo matches or beats machines that were considered perfectly capable development laptops not long ago, and it does so at a fraction of the power draw.

Local LLM Inference (LMStudio)

Stretching the limits of what this machine should rationally be doing, I ran two local models via LMStudio to see what's possible on 8GB of unified memory.

Both models were loaded in MLX format rather than GGUF. MLX is Apple's own machine-learning framework, designed around Apple Silicon's unified memory and running inference on the GPU via Metal, which makes it the better-optimised choice on this hardware.

The prompt given to both models was:

"If Apple were to release a budget laptop leveraging the chip of the iPhone 16 Pro, do you think it would be a functional laptop with reasonable performance for every day tasks? What specification do you anticipate the laptop might have?"

LFM 2.5 1.2B (Liquid AI)

LFM 2.5 is a model designed by Liquid AI specifically for on-device deployment. At only 1.2 billion parameters it has a tiny footprint and is a good candidate for testing whether the Neo can handle inference at all.

LFM 2.5 1.2B MLX — Inference Results

| Metric | Value |
|---|---|
| Time to First Token | 336 ms |
| Inference Speed | 37.68 tok/s |
| Input Tokens | 55 |
| Output Tokens | 506 |

MLX format, LMStudio. Single inference run.

Nearly 38 tokens per second is genuinely usable for a model this size, and time to first token was snappy at 336ms. For lightweight summarisation or drafting tasks with a small model, the Neo handles it fine.
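The two headline metrics combine into a rough wall-clock figure for the whole answer. This is a sketch that assumes decode speed stays constant and that the reported tokens-per-second excludes the time to first token; `response_seconds` is a hypothetical helper, not part of LMStudio.

```python
# Rough wall-clock estimate for one LMStudio response, built from the
# reported metrics: wait for the first token, then generate the rest
# at the measured decode rate.
# Assumptions: constant decode speed over the whole response, and the
# reported tok/s figure does not include the time to first token.


def response_seconds(ttft_ms: float, tokens_per_second: float, output_tokens: int) -> float:
    """Estimated time from submitting the prompt to receiving the last token."""
    return ttft_ms / 1000 + output_tokens / tokens_per_second


if __name__ == "__main__":
    # LFM 2.5 1.2B (MLX) figures from the inference results table
    total = response_seconds(ttft_ms=336, tokens_per_second=37.68, output_tokens=506)
    print(f"LFM 2.5 1.2B: about {total:.1f} s for a 506-token answer")
```

For this run that comes to roughly 14 seconds for the full reply.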

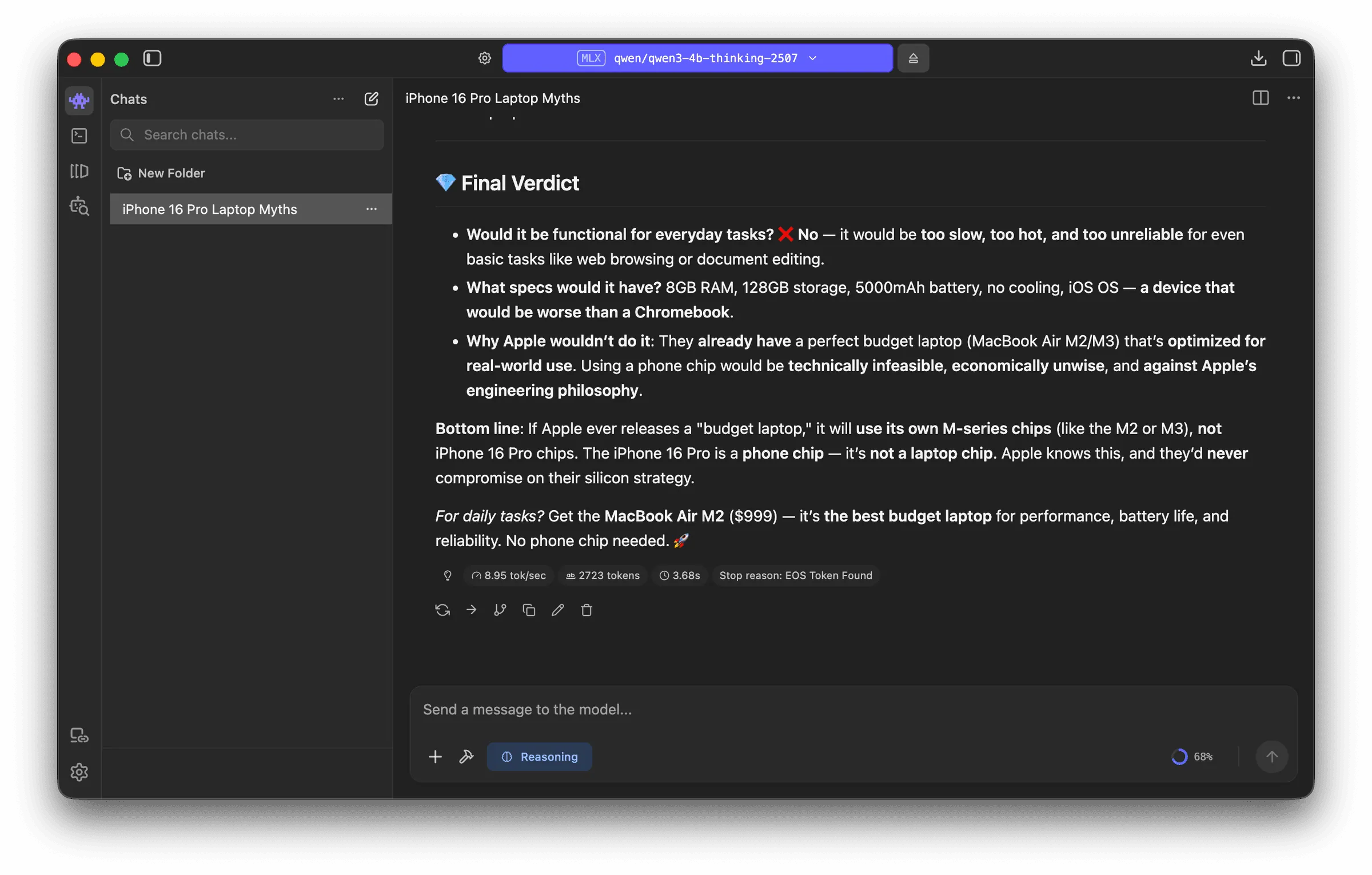

Qwen 4B Thinking (2507, 8-bit MLX)

Stepping up to a 4 billion parameter reasoning model (8-bit quantisation) paints a very different picture.

Qwen 4B Thinking 8-bit MLX — Inference Results

| Metric | Value |
|---|---|
| Time to First Token | 3,680 ms |
| Inference Speed | 8.95 tok/s |
| Input Tokens | 55 |
| Output Tokens | 2,723 |

MLX 8-bit quant, LMStudio. Single inference run.

Under 9 tokens per second, with a wait of nearly 4 seconds for the first token. The laptop was noticeably unresponsive during generation. That is too slow for daily use, though a 4-bit quant would likely be more practical.
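Part of the reason a 4-bit quant should help is simple arithmetic on weight size. The sketch below estimates raw weight memory for a nominal 4-billion-parameter model. It deliberately ignores the KV cache, activations, quantisation scales and everything macOS and LMStudio themselves need, so real usage will be higher.

```python
# Back-of-the-envelope weight memory for a 4-billion-parameter model at
# different quantisation levels, compared with the Neo's 8 GB of memory.
# Assumptions: a nominal 4e9 parameters; weights only (no KV cache,
# activations, quantisation scales, LMStudio or macOS overhead).

PARAMS = 4e9
UNIFIED_MEMORY_GB = 8


def weight_gb(params: float, bits_per_weight: int) -> float:
    """Size of the raw weights in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_gb(PARAMS, bits)
        share = size / UNIFIED_MEMORY_GB
        print(f"{bits:>2}-bit: ~{size:.1f} GB of weights ({share:.0%} of 8 GB)")
```

At 8-bit the weights alone take about 4 GB, half of the machine's memory; at 4-bit that drops to about 2 GB, leaving far more headroom for everything else.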

As a hilarious aside, the response from Qwen indicated that this very laptop would be a terrible idea and that Apple would never do it.

Inference Telemetry

Power and CPU usage during both inference runs, captured via powermetrics.

LMStudio Inference — System Telemetry

Sampled via powermetrics at ~1s intervals during LMStudio inference.
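To reproduce telemetry like this, one approach is to log powermetrics to a text file (for example `sudo powermetrics --samplers cpu_power,gpu_power -i 1000 > powermetrics.log`) and average the readings afterwards. The parser below is a sketch: the `CPU Power: 4125 mW` line format is assumed from powermetrics' text output on Apple Silicon, so check it against your own capture first.

```python
import re

# Assumed line format of powermetrics' text output on Apple Silicon, e.g.
#   "CPU Power: 4125 mW"
#   "GPU Power: 40 mW"
# The "Combined Power (CPU + GPU + ANE)" line is deliberately not matched.
POWER_LINE = re.compile(r"^(CPU|GPU|ANE) Power:\s*(\d+)\s*mW", re.MULTILINE)


def average_power_mw(log_text: str) -> dict[str, float]:
    """Average each power rail over all samples found in a powermetrics log."""
    readings: dict[str, list[int]] = {}
    for rail, milliwatts in POWER_LINE.findall(log_text):
        readings.setdefault(rail, []).append(int(milliwatts))
    return {rail: sum(values) / len(values) for rail, values in readings.items()}


if __name__ == "__main__":
    sample = "\n".join([
        "CPU Power: 4000 mW",
        "GPU Power: 6000 mW",
        "CPU Power: 5000 mW",
        "GPU Power: 7000 mW",
    ])
    print(average_power_mw(sample))
```

On the synthetic sample this prints `{'CPU': 4500.0, 'GPU': 6500.0}`.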

The fact that local LLM inference works at all on a machine with an iPhone chip and 8GB of RAM is impressive. It bodes well for a potential A19 Pro-based Neo with an extra 4GB of memory and a slight spec bump in the future. But for now, if local models are part of your workflow, this isn't the machine for you.

Memory Pressure

With only 8GB, swap usage becomes a factor during heavier workloads. macOS manages memory aggressively and the SSD is fast enough that light swap doesn't feel catastrophic, but it's not invisible either.
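To check how much swap a workload is actually using, macOS reports the totals through `sysctl vm.swapusage`. The sketch below parses that output; the regex assumes the usual `total = 2048.00M  used = 1024.50M  free = 1023.50M` layout, and the gigabyte branch is purely defensive.

```python
import re
import subprocess
import sys

# Matches fields like "used = 1024.50M" in `sysctl vm.swapusage` output.
# The "G" unit handling is a defensive assumption; macOS normally reports M.
SWAP_FIELD = re.compile(r"(total|used|free) = ([\d.]+)([MG])")


def parse_swapusage(text: str) -> dict[str, float]:
    """Return swap total/used/free in megabytes from `sysctl vm.swapusage` text."""
    fields: dict[str, float] = {}
    for name, value, unit in SWAP_FIELD.findall(text):
        fields[name] = float(value) * (1024 if unit == "G" else 1)
    return fields


if __name__ == "__main__":
    if sys.platform == "darwin":
        out = subprocess.run(
            ["sysctl", "-n", "vm.swapusage"],
            capture_output=True, text=True, check=True,
        ).stdout
    else:
        # Not on macOS: fall back to an illustrative sample line.
        out = "total = 2048.00M  used = 1024.50M  free = 1023.50M  (encrypted)"
    print(parse_swapusage(out))
```

Sampling this before and during a heavy task gives a quick sense of how far the machine is leaning on the SSD.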